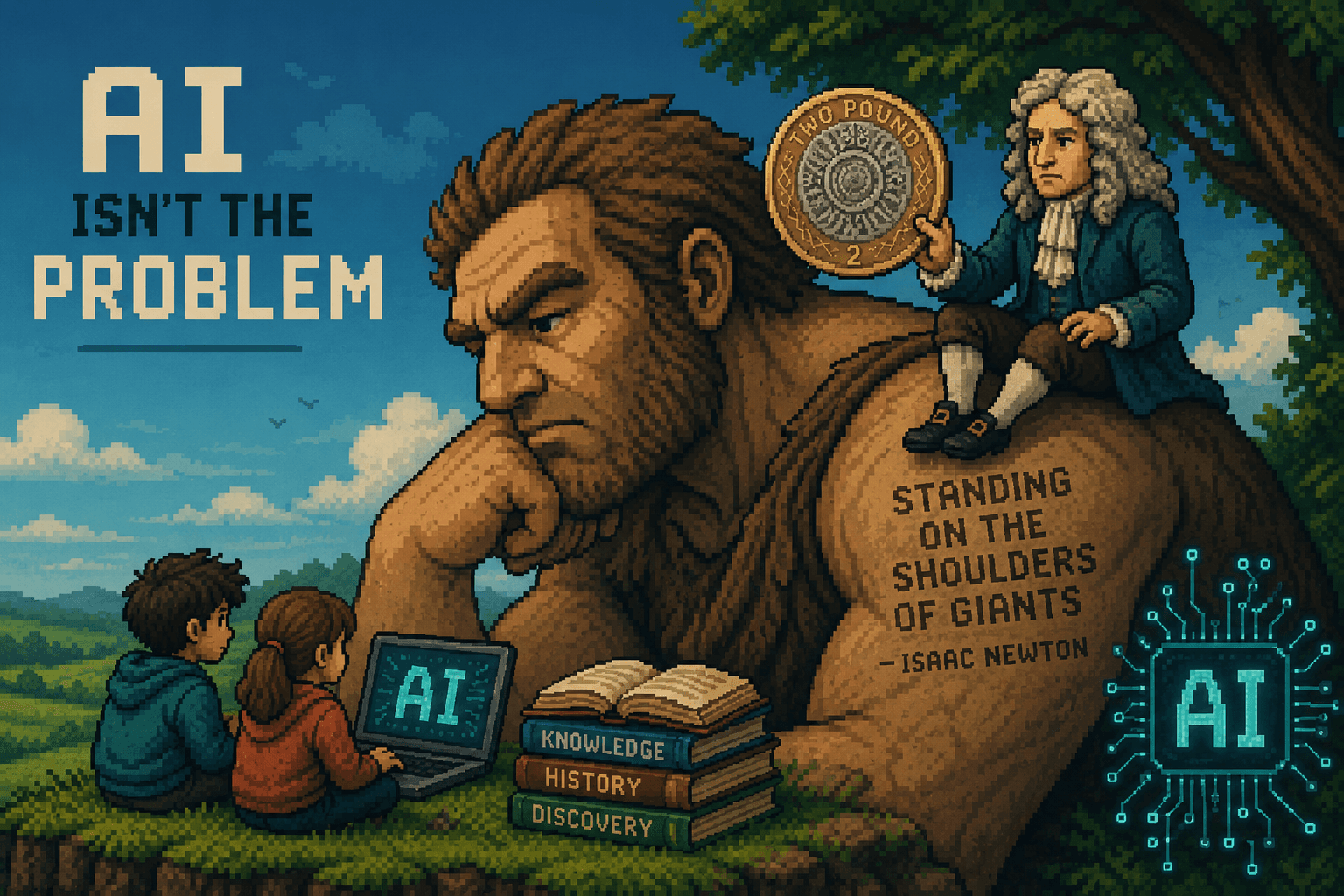

AI isn't the issue…your assessment is.

Two Quid AI: A framework for K-12 assessment in the age of artificial intelligence.

When assessment and AI try to mix, it feels like AI comes off pretty badly. Often it can be seen as the tool that is breaking our assessments, fuelling plagiarism or cheating, and the instrument that is allowing our students to take all of the rigour out of learning. Those things are possible, so it is easy to blame. Yet, AI is definitely here to stay. So as educators, I think we should probably find a way to make assessment and AI start playing more nicely with each other.

To do this, I've come up with something called Two Quid AI. It is aimed at K-12 educators and offers a way to think about age-appropriate ways of delivering the AI and assessment mix in their classrooms.

Why Two Quid AI?

Really good question. I was inspired to use this concept for a couple of reasons. The first: the British £2 coin design is about the progression of technology, starting with the Iron Age and ending with the age of the internet. They may now need to add a fifth ring to signify AI, and if they do, you know who suggested it first. The coin's design is a masterpiece of historical shorthand.

At its centre sit decorative Celtic swirls representing the Iron Age. Moving outward, a series of cogs and wheels mark the Industrial Revolution. The third ring shifts into a silicon chip pattern, signalling the Computer Age, and the outer ring represents the internet as a network of connecting lines.

However, more importantly, along the edge of the coin is a quote from Sir Isaac Newton: 'Standing on the shoulders of giants.' Now, I do believe this began as a 17th-century jab at a shorter contemporary: Newton wrote it to his rival Robert Hooke in 1675, and Hooke was famously short with a significant hunch, so the subtext was less than subtle. Even so, the phrase is now rightly used to describe how we can go further and do more by building on the past. It is one of the statements that underpins why literature reviews are so essential to the foundation of any research.

At any rate, the coin inspired me to think about AI and assessment with Newton's giant in mind. I've broken it down into three parts: Struggle, Scaffold and Synthesis. The first stage, the Struggle, represents the climb from the floor to the shoulder. The Scaffold is the vantage point from there, and it is what is currently happening in many of our more progressive classrooms. And the Synthesis is the very pinnacle: the maximisation of what can be assessed and achieved with the Human and AI mix.

These stages build on the AI Assessment Scale developed by Mike Perkins, Leon Furze and their colleagues, as well as the Digital Education Council's guidance. However, those frameworks were primarily designed for Higher Education. My focus here is squarely on K-12, and ensuring we don't reduce our younger learners' education to a simple input-output equation.

Within this piece I'm going to work through all three stages. Let's start at the bottom, which is exactly where we should.

The Struggle

This for me represents the majority of the giant — and the majority of our assessment with school-aged learners. Simply put, the Struggle is about ensuring there is an appropriate degree of challenge for each individual learner to make progress. AI, for the most part, might not be involved at all in the learning or assessment process for the youngest of pupils, and it can gradually increase as pupils get older. This is not to say that pupils should not learn about AI and develop competencies with it; rather, it should not interfere with their primary learning and knowledge acquisition.

The Biological Basis for the Struggle

To understand why we must protect the Struggle, we need to look at how our brains are actually wired. I think David Geary's work here is important. He drew the distinction between biologically primary and secondary knowledge.

Primary knowledge covers the skills our brains have developed the innate capacity to acquire almost effortlessly — speaking our native language, recognising faces, understanding basic social cues. We don't need a classroom for those; they are innate adaptations.

Secondary knowledge is different. This is cultural information: reading, writing, complex mathematics. Our brains haven't naturally adapted to pick these up on the fly. This is the hard stuff. As David Didau puts it far more eloquently than I ever could: 'Schools exist to teach the hard stuff that children are unlikely to just pick up from their environments.' Secondary knowledge requires formal instruction and effortful practice to become automatic. If we allow AI to bypass the effort required for these acquired skills, we aren't just taking a shortcut; we are failing to build the very cognitive architecture that schools were designed to create.

The Danger of Shallow Competence

True expertise is about automating the basics to free up working memory for higher-level thinking. If a student hasn't struggled to move knowledge into long-term memory, their working memory remains cluttered with the basics, leaving no room for complex synthesis.

Linking this with the understanding of prediction errors aiding learning suggested by Reuter, Borovsky and Lew-Williams (2019), we can see that when a learner is actively predicting in the learning process, their learning is enriched. Dopamine spikes appear when an outcome differs from the prediction made, and new learning takes place. However, in order for this to actually happen, you need to have enough knowledge to make the prediction in the first place. Outsourcing to AI simply doesn't support that process.

There is a real danger in the AI shortcut. If we allow AI to bypass the rigour of the learning process, we leave students with what I'd call Shallow Competence. They can produce a high-quality output, but they have no internal schema to back it up. A student with no internal knowledge creates no expectation. They cannot spot an AI hallucination, they cannot critique a weak argument, and they cannot learn from mistakes because they didn't know enough to make a mistake in the first place.

There's good evidence of this too. Fen et al. (2024) wrote specifically about learners who regularly used AI. Their formative outputs, such as essays, were of better quality, but they struggled in summative scenarios and in some cases did decidedly worse than peers who used more traditional methods. This isn't surprising. If we kept giving a child a calculator instead of going through the process of arithmetic, not only would we likely find they have no ability to calculate without it, but they'd be less able to spot patterns too.

How much more worrying is this when we apply it to written language and comprehension — the building blocks of how we communicate?

Oakley, Johnston, Chen, Jung and Sejnowski (2025), when writing about the essential need for knowledge in the curriculum, put it plainly: 'Problems arise only when offloading replaces the initial learning process. Experts with solid schemata can safely offload some details, but novices who offload everything never internalise essential knowledge.' Clearly, we need to keep rigour in curriculum and teaching methodologies, so that learners gain automaticity or fluency with that content before we start any offloading. Offloading before that point simply results in a lack of progress.

AI in the Struggle

Therefore, in this first stage of AI and Assessment, keeping the Struggle is imperative. And if we think of climbing a giant to its shoulder, that would represent the majority of our climb — perhaps 85% or so. So too, I think, should our assessment design, both within class and summatively, ensure that we are assessing knowledge acquisition for the vast majority of the time.

This doesn't mean AI has no role at all at this level. It is always handy having a guide rope on a climb.

If we are utilising AI in the rigour-inducing Struggle phase, we need to make sure that the potential for offloading is greatly reduced by considering AI-resilient design pedagogy. I've really enjoyed the carefully crafted grids by McMinn when thinking about ensuring resilience — having that visual reminder is incredibly useful when planning out activities where AI might be available. For longer pieces, ensure you are creating regular checkpoints, checking for understanding, and challenging learners so you can be confident in their progress within the process.

If there is a chance that ChatGPT is being used, try to move your assessment and feedback from output-focused to process-focused. Better yet, a customised agentic approach gets you there, ensuring a more Socratic method of questioning rather than answer-giving.

One of the ways I've created this is by using a master prompt that allows me to quickly edit a customised agent for each unit of work. When I build an agent I generally ensure my prompt includes:

• A specific role to play — for these, a 'Socratic teacher with excellent pedagogical understanding for the age group at hand' usually does the trick

• A clear instruction not to give away answers, but always guide through to the solution

• Some prior understanding attained from the learner, which the agent can then build on

• Active engagement through questioning the learner rather than lecturing them

• Scaffolding or stretching based on responses

• Some knowledge context: teaching points from a lesson, curriculum guidance, resources and so on

Once you've built that, you can test and deploy. The AI isn't a source of answers; it is a mirror for the student's own thinking. It keeps the learner in the Struggle while providing just enough rope to keep climbing.
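As a rough illustration of the master-prompt idea, here is a minimal Python sketch. The names `UnitContext`, `MASTER_PROMPT` and `build_agent_prompt` are hypothetical, and the template wording is an assumption rather than the prompt I actually use. It simply assembles the six ingredients listed above into text you could paste into whichever agent builder you prefer.

```python
from dataclasses import dataclass, field
from typing import List


@dataclass
class UnitContext:
    """Per-unit details slotted into the master prompt (all fields are illustrative)."""
    age_group: str
    topic: str
    prior_understanding: str
    knowledge_context: List[str] = field(default_factory=list)


# Hypothetical template covering role, no-answers rule, prior understanding,
# questioning, scaffolding/stretching, and knowledge context.
MASTER_PROMPT = """\
Role: You are a Socratic teacher with excellent pedagogical understanding for {age_group} learners.
Topic: {topic}
Rules:
- Never give away the answer; always guide the learner through to the solution.
- Engage by asking questions rather than lecturing.
- Scaffold when a response shows difficulty; stretch when it shows confidence.
What the learner already understands: {prior_understanding}
Knowledge context:
{knowledge_context}
"""


def build_agent_prompt(unit: UnitContext) -> str:
    """Fill the master prompt with one unit's details."""
    context_lines = "\n".join(f"- {item}" for item in unit.knowledge_context)
    return MASTER_PROMPT.format(
        age_group=unit.age_group,
        topic=unit.topic,
        prior_understanding=unit.prior_understanding,
        knowledge_context=context_lines or "- (none supplied)",
    )


if __name__ == "__main__":
    habitats = UnitContext(
        age_group="Year 5",
        topic="Habitats and adaptations",
        prior_understanding="Can name three habitats and one animal from each",
        knowledge_context=["Adaptations help animals survive in their habitat"],
    )
    print(build_agent_prompt(habitats))
```

Only the `UnitContext` changes from unit to unit, which is what makes the per-unit edit quick.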

Any platform that offers question-level analysis and a good depth of questions can also support you in this journey. This is why I am such a fan of tools like CENTURY — rooted in retrieval practice, spacing and interleaving, and using AI Socratically to provide feedback and support rather than just answers. It is, for me, a model of what the Struggle stage should look like when technology is involved at all.

The Scaffold

So we've ensured our learners have struggled. We've used platforms and approaches that ensure they know the foundations. Great. But we also have a responsibility to prepare them for a world where AI is a standard colleague. This is the Scaffold — working alongside AI in a modern, meaningful way. And it requires a clear-eyed view of where it is appropriate and where it must still be restricted.

We aren't teaching AI as a separate subject here. We are teaching our subjects — English, Science, History, Maths — through the lens of an AI-augmented reality. The Scaffold is about reaching the height of the giants who came before us. The student isn't just using AI; they are working alongside it. The AI acts as the support structure that allows the student to reach higher-order thinking faster.

Shifting What We Assess

This requires us to change the unit of work we mark. Rather than simply assessing a final product, we assess the student's ability to direct and refine the tool they are working with. Here are five key touchpoints for the Scaffold stage:

• Planning: Let them brainstorm with AI, but assess the selection of ideas. Which did they keep? Which did they discard, and why?

• Research: Use AI to find papers, but assess the evaluation of those sources. Can they spot the hallucination or the bias?

• Creation: If they use AI to draft, the hand-in must include the prompt. We assess the prompt engineering as much as the prose.

• Editing: This is my favourite. The student hands in the AI output, their edited version, and a reflection on why they changed what they did.

• Feedback: The student produces a draft, uses AI for a critique, and then submits a second draft. We assess the delta — the improvement between the two.

This way, we aren't just assessing a final product; we are assessing the student's ability to stand on the shoulders of the giant while keeping their own eyes wide open.
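For the Feedback touchpoint, a teacher may want to see at a glance what actually changed between the two drafts. The sketch below is one possible aid, using Python's standard `difflib` module; the function name `draft_delta` and the returned fields are hypothetical. Note the assumption built in: it surfaces how much changed and where, not whether the change is an improvement. That judgement stays with the teacher.

```python
import difflib
from typing import Dict, List, Union


def draft_delta(first_draft: str, second_draft: str) -> Dict[str, Union[List[str], float]]:
    """Summarise the textual changes between two drafts.

    Returns the lines added and removed in the second draft, plus a
    similarity ratio between 0.0 (nothing shared) and 1.0 (identical).
    """
    diff = list(
        difflib.unified_diff(
            first_draft.splitlines(),
            second_draft.splitlines(),
            fromfile="draft_1",
            tofile="draft_2",
            lineterm="",
        )
    )
    # Skip the '---' / '+++' file headers; keep only real content changes.
    added = [line[1:] for line in diff if line.startswith("+") and not line.startswith("+++")]
    removed = [line[1:] for line in diff if line.startswith("-") and not line.startswith("---")]
    similarity = difflib.SequenceMatcher(None, first_draft, second_draft).ratio()
    return {"added": added, "removed": removed, "similarity": similarity}


if __name__ == "__main__":
    before = "Factories were busy.\nChildren worked there."
    after = "Factories were busy.\nChildren as young as eight worked long shifts there."
    summary = draft_delta(before, after)
    print("Added:", summary["added"])
    print("Removed:", summary["removed"])
```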

The Scaffold in Practice

For younger learners, this doesn't need to be complicated. Imagine a primary class studying animals. We aren't asking the AI to write the report. Instead, the AI provides a scaffolded fact file. The students — who have already struggled to learn about habitats and adaptations in class — must act as Expert Detectives. They highlight what they know to be true, and perhaps more importantly, they look for nonsense or hallucinations. It turns a passive reading task into an active, critical evaluation of a digital source. Even at this age, we are teaching them that they are the master of the machine, not the other way around.

At secondary level, the same principle applies with more sophistication. Take a History class studying Working Conditions during the Industrial Revolution. The AI can be used to bring a persona to life: students can 'interview' a simulated factory worker from 1840. But the assessment isn't the interview itself. The assessment is the comparative analysis: based on the primary sources studied in the Struggle phase, where did the AI get it right, and where did it romanticise or misrepresent the harsh realities of the Victorian era? By using the AI to synthesise a person, we give students a complex scaffold to push against, using their internal knowledge to critique a sophisticated external model.

Designing for AI Resilience

As we move toward the Synthesis, we need a rubric for our own task design. I suggest looking at every assessment through two lenses: Cognitive Demand and Degree of AI Resilience.

We have to ask: where does our task sit on this matrix? If a task has low AI resilience, meaning the AI can complete it perfectly with a single prompt, we probably shouldn't be setting it as a formal assessment. A standard pop quiz or basic multiple-choice homework sits here. AI tends to do incredibly well. If we set these as high-stakes or graded tasks without process accountability, we aren't assessing the student; we're assessing their ability to copy and paste.

The goal is to move our assessments toward the top right: tasks that require high cognitive effort (evaluation, creation, analysis) but are structured in a way that is AI resilient. This means tasks that are hyper-local to your specific classroom, tasks that require personal reflection on the Struggle the student experienced, or tasks that require the student to critique the AI's output. If the AI can do the task for the student, the task is a poor measure of the student's mind.
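To make the two-lens check concrete, here is a small Python sketch that places a task in a quadrant. Everything in it is an assumption for illustration: the 1 to 5 scales, the threshold of 3, the names `Task` and `quadrant`, and the advice attached to the two mixed quadrants, which is my reading of the matrix rather than part of any published rubric.

```python
from dataclasses import dataclass


@dataclass
class Task:
    name: str
    cognitive_demand: int  # hypothetical scale: 1 (recall) to 5 (evaluation, creation, analysis)
    ai_resilience: int     # hypothetical scale: 1 (one prompt completes it) to 5 (hyper-local, reflective)


def quadrant(task: Task, threshold: int = 3) -> str:
    """Place a task on the Cognitive Demand x AI Resilience matrix."""
    high_demand = task.cognitive_demand >= threshold
    resilient = task.ai_resilience >= threshold
    if high_demand and resilient:
        return "top right: suitable for formal assessment"
    if high_demand:
        return "high demand, low resilience: add process accountability"
    if resilient:
        return "low demand, high resilience: raise the cognitive challenge"
    return "low demand, low resilience: keep it low-stakes"


if __name__ == "__main__":
    tasks = [
        Task("Basic multiple-choice homework", cognitive_demand=1, ai_resilience=1),
        Task("Critique the AI fact file using class notes", cognitive_demand=4, ai_resilience=4),
    ]
    for task in tasks:
        print(f"{task.name} -> {quadrant(task)}")
```

The scores themselves are a teacher's judgement; the sketch only makes the sorting step explicit so it can be applied consistently across a unit's tasks.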

The Synthesis

Finally, we reach the Synthesis. This is the peak and, I'll be honest, it is rarely reached in our schools. But I'd love to see it more where possible. This is what it truly means to stand on the shoulders of giants: to see further than anyone else has before.

Synthesis isn't just using AI to finish a task. It is the seamless integration of human intuition and machine intelligence to create something that neither could achieve alone. In our classrooms, this has two key dimensions: using AI to enhance traditional assessment, and making AI itself the focus of the assessment.

AI as Enhancement

When we use AI to enhance traditional subjects (Geography, Art, Business, English), the goal is to push the boundaries of what a student can produce, while ensuring the human element remains the most important part of the equation. We are moving away from the 'AI is cheating' narrative and toward a Practical Real World Application model, preparing students for a workforce where AI fluency will be as fundamental as literacy was for previous generations.

The Digital Education Council's roadmap for high-level integration offers a useful structure here. Methods such as AI-Guided Self-Assessment — where students use a Socratic bot to interrogate their own work — or AI-First, Human Revision — where the challenge is to take a generic machine draft and turn it into something with human soul and accuracy — are both high-leverage and high-agency at once.

AI as the Object of Study

When we move to AI as the key object of study, we are assessing prompt engineering, ethics, and bias awareness. We are looking at the AI as an artefact: something to be dismantled and understood.

My three personal favourites offer the highest cognitive challenge:

• AI as a Simulated Role-Player: Having a student debate a Victorian factory owner or Lady Macbeth forces them to use their internal knowledge to sustain a high-level interaction. A standard AI might just write an essay. A Socratic AI, the kind we should be deploying, refuses to do the work, and instead asks: 'What does the recurring motif of blood suggest about Lady Macbeth's mental state in Act 5?' The AI isn't a source of answers; it's a mirror.

• Constructive Misuse: Deliberately giving the AI a bad prompt or asking it to perform a task it's poorly suited for, then assessing the student's ability to identify and correct the resulting failures. This is rigorous critical thinking in disguise.

• Artefact Redesign: Taking an AI-generated piece of work and completely redesigning it to fit a specific, localised context that the AI could never fully understand. The more a task is tied to your specific classroom discussion, your local community, or a student's own Struggle log, the more human agency is required to make the AI output useful.

Seven AI Competencies Worth Assessing

To reach this level of Synthesis, we must assess more than just subject knowledge. We need to be assessing these seven key AI competencies:

• Fundamentals and Input Design: Do they understand how the model works, and can they design high-quality prompts to get the best out of it?

• Output Evaluation and Bias Awareness: Can they spot the hallucination? Do they recognise the inherent bias in the data the AI was trained on?

• Integration and Application: Can they take an AI output and apply it to a complex, real-world problem?

• Ethics and Responsible Use: Do they understand the footprint of their digital actions and the importance of academic integrity?

• Reflection and Metacognition: Can they reflect on the process? Can they explain how the AI changed their thinking?

• AI Leverage vs Human Agency: Can they identify when to lean on the machine and when to trust themselves?

• Assessment of the Human Delta: Can they articulate what they brought to the work that the AI could not?

By focusing our assessment on these competencies, we ensure that our students aren't just using AI — they are mastering it. They aren't just looking at the giants; they are seeing beyond them.

Back to the Coin

Let's return to where we started. The design of the two-pound coin is more than just history; it is a blueprint for the future of education. We start at the centre with the Struggle. Just as the Iron Age was our foundation, secondary knowledge (reading, writing, arithmetic) must be internalised. We cannot skip this. We must protect the cognitive friction that builds the brain's architecture.

We move outward to the Scaffold. This is where we learn to use the cogs and silicon of our era. We integrate AI intentionally — using it to brainstorm, to research, and to critique — but always through the lens of the knowledge we gained in the Struggle.

We finally reach the Synthesis: the outer ring of the network. It is where our students stand on the shoulders of the giants who came before them to see further, think deeper, and create things that were previously impossible.

Our job as educators in the AI era isn't to prevent students from using the outer ring. It is to ensure they have the strength and the wisdom to hold the centre. If we do that, we aren't just teaching for an exam. We are teaching for the next era of human adaptation. And perhaps, if we get it right, we are becoming the giants that the next generation will stand upon.

References

Didau, D. (n.d.). Can we learn from evolutionary psychology? Learning Spy. https://learningspy.co.uk/psychology/can-learn-evolutionary-psychology/

Bhattacharya, B. S., & Bhattacharya, S. (2022). The brain supports adaptive behavior: Learning when reality contradicts expectations. Proceedings of the National Academy of Sciences. https://www.pnas.org/doi/10.1073/pnas.2117625118

Reuter, T., Borovsky, A., & Lew-Williams, C. (2019). Building meaning: Semantic complexity and predictive processing. Princeton Baby Lab. https://babylab.princeton.edu/sites/g/files/toruqf7736/files/documents/reuterborovskylew-williams2019.pdf

Fen, X., et al. (2024). [Study on AI use and formative vs. summative assessment outcomes]. SSRN Working Paper. https://papers.ssrn.com/sol3/papers.cfm?abstract_id=5250447

Oakley, B., Johnston, E., Chen, X., Jung, M., & Sejnowski, T. (2025). The Memory Paradox: Why Our Brains Need Knowledge in an Age of AI. SSRN.

Perkins, M., & Furze, L. (2023). AI Assessment Scale. Available via the Digital Education Council.

Digital Education Council. (2024). AI in Higher Education: Guidance and Frameworks.

McMinn, S. AI-Resilient Task Design Grids [Practitioner Resource].

Geary, D. C. (2007). Educating the evolved mind: Conceptual foundations for an evolutionary educational psychology. In J. S. Carlson & J. R. Levin (Eds.), Psychological perspectives on contemporary educational issues. Information Age Publishing.

Miki Devitt

Ashton Edu